A common mistake when analyzing network speed/bandwidth between different applications and servers is to rely solely on the total size of the files being transferred. In fact, one big file transfers much faster than thousands of small files with the same accumulated size. This is due to the per-file overhead of reading/writing each file, setting up TCP/IP sessions, scanning it for viruses, etc. Furthermore, it is application dependent.

I built a small lab with an FTP server, a switch, a firewall, and an FTP client, in which I experimented with different file sizes. In this blog post I show the measured transfer times and some Wireshark graphs.

Lab

This simple lab consists of the following components:

- Notebook, Windows 7, FileZilla FTP Server

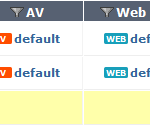

- Fortinet FortiGate firewall (FortiWiFi 90D, FortiOS version 5.2.5) with “next-gen” features such as AntiVirus and Application Control enabled. In addition, a destination NAT (Virtual IP) was configured for the FTP server.

- Dell Switch, unmanaged, 1 GBit/s

- Notebook, Windows 7, FileZilla FTP Client

- The lab was IPv4-only. The cabling was as follows:

FTP server <-> FortiGate <-> switch <-> FTP client

- The Wireshark graphs were generated with Wireshark version 2.0.1.

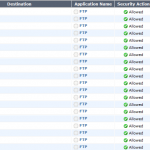

I tested the following scenarios:

- A single file of 100 MBytes (generated with the Dummy File Creator)

- 100 files of 1 MByte each, i.e., 100 MBytes in total (generated with the Windows fsutil tool)
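For readers without Windows, similar test sets can be produced with a few lines of Python. This is a minimal sketch, not the tooling used in the lab: it assumes 1 MByte = 1,048,576 bytes and, like `fsutil file createnew`, writes zero-filled files. The directory layout and file names are hypothetical.

```python
from pathlib import Path

MBYTE = 1024 * 1024  # assumption: 1 MByte = 1,048,576 bytes


def create_zero_file(path: Path, size: int) -> None:
    """Write `size` zero bytes to `path` (similar to `fsutil file createnew`)."""
    chunk = bytes(MBYTE)  # one MByte of zeros, reused for every write
    remaining = size
    with open(path, "wb") as f:
        while remaining > 0:
            n = min(remaining, len(chunk))
            f.write(chunk[:n])
            remaining -= n


def create_test_sets(base: Path, unit: int = MBYTE, count: int = 100) -> None:
    """Create one file of count*unit bytes and `count` files of `unit` bytes each."""
    big_dir = base / "big"      # hypothetical directory layout
    small_dir = base / "small"
    big_dir.mkdir(parents=True, exist_ok=True)
    small_dir.mkdir(parents=True, exist_ok=True)

    create_zero_file(big_dir / "big_file.bin", count * unit)
    for i in range(count):
        create_zero_file(small_dir / f"small_{i:03d}.bin", unit)
```

Calling `create_test_sets(Path("ftp_testdata"))` yields one 100 MByte file and 100 files of 1 MByte each, i.e., the same 100 MBytes in both sets.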

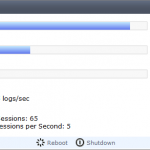

That is, in both cases I transferred 100 MBytes in total, once as a single file and once split into 100 files. Here are some screenshots of the file properties as well as the FortiGate settings and CPU usage during the tests.

Results

These are the measured transfer times:

- 1 big file: 15.2 seconds = 52.6 MBit/s

- 100 small files: 37.7 seconds = 21.2 MBit/s
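The throughput values follow from 100 MBytes × 8 = 800 MBit divided by the transfer time. The sketch below reproduces them and adds a rough estimate of the extra time per additional file. It rests on a simplifying assumption: the per-file overhead is constant, and the pure data transfer in the 100-file test takes as long as in the single-file test. The function names are illustrative.

```python
def throughput_mbit_s(total_mbyte: float, seconds: float) -> float:
    """Average throughput in MBit/s for `total_mbyte` MBytes moved in `seconds`."""
    return total_mbyte * 8 / seconds


def overhead_per_extra_file(t_one_file: float, t_many_files: float, n_files: int) -> float:
    """Rough per-file overhead in seconds.

    Simplifying assumption: both runs move the same amount of data, so the whole
    time difference is caused by the (n_files - 1) additional files.
    """
    return (t_many_files - t_one_file) / (n_files - 1)


big = throughput_mbit_s(100, 15.2)    # single 100 MByte file
small = throughput_mbit_s(100, 37.7)  # 100 files of 1 MByte each
per_file = overhead_per_extra_file(15.2, 37.7, 100)

print(f"1 big file:      {big:.1f} MBit/s")
print(f"100 small files: {small:.1f} MBit/s")
print(f"~{per_file * 1000:.0f} ms extra per additional file")
```

With the measured times this prints 52.6 and 21.2 MBit/s and roughly 227 ms per additional file. For comparison, moving 1 MByte at the single-file rate takes about 15.2 s / 100 = 152 ms, so under this rough model the per-file overhead in this lab exceeded the time for the payload itself.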

More interesting is the corresponding IO graph from Wireshark, which shows the transfers of both tests. The first block is the big file, while the second block shows the large variance caused by the many small files.
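An IO graph is essentially a histogram over time: packets are grouped into fixed intervals and the bytes per interval are plotted. The following sketch does this for a list of (timestamp, frame length) pairs and converts each bin to MBit/s. The input format, the 1-second interval, and the sample data are assumptions for illustration, not Wireshark's internals. A steady transfer yields nearly equal bins, whereas transfers interrupted by gaps produce a high variance.

```python
from collections import defaultdict
from statistics import pstdev


def io_graph(packets: list[tuple[float, int]], interval: float = 1.0) -> list[float]:
    """Return the throughput in MBit/s per interval for (timestamp s, frame bytes) pairs."""
    if not packets:
        return []
    start = min(ts for ts, _ in packets)
    bins: dict[int, int] = defaultdict(int)
    for ts, length in packets:
        bins[int((ts - start) // interval)] += length
    # Emit empty intervals too, so idle gaps show up as zero throughput.
    return [bins.get(i, 0) * 8 / interval / 1e6 for i in range(max(bins) + 1)]


# Hypothetical captures of 1514-byte frames, 100 frames per second while active:
steady = io_graph([(i / 100, 1514) for i in range(300)])  # one continuous transfer
bursty = io_graph([(i / 100, 1514) for i in range(100)]
                  + [(2 + i / 100, 1514) for i in range(100)])  # transfer, pause, transfer
print("steady:", [round(v, 2) for v in steady], "stdev", round(pstdev(steady), 2))
print("bursty:", [round(v, 2) for v in bursty], "stdev", round(pstdev(bursty), 2))
```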

The Wireshark Conversations list shows the TCP connections of both tests. It reveals that the total amount of bytes for the first test was 109 M (yellow), while every small file took about 1130 k (green).
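In FTP's default stream mode, each file is sent over its own data connection, which is why every small file appears as a separate TCP conversation. Part of the gap between file size and conversation byte count is protocol overhead, because every TCP segment carries its own Ethernet, IPv4, and TCP headers. The sketch below estimates the captured bytes of the data direction only. It assumes an MSS of 1460 bytes and 54 header bytes per frame (14 Ethernet + 20 IPv4 + 20 TCP, no options), and it ignores ACKs, the TCP handshake and teardown, and retransmissions. It is an approximation, not a reconstruction of the measured values.

```python
import math

ETH_HEADER = 14   # Ethernet II header (FCS usually not captured)
IPV4_HEADER = 20  # IPv4 header without options
TCP_HEADER = 20   # TCP header without options (assumption; real traffic may use timestamps)
MSS = 1460        # assumed maximum segment size on a 1500-byte MTU link


def on_wire_bytes(payload: int, mss: int = MSS) -> int:
    """Estimate captured frame bytes carrying `payload` bytes in one direction.

    Ignores ACKs, the TCP handshake/teardown, and retransmissions.
    """
    segments = math.ceil(payload / mss)
    return payload + segments * (ETH_HEADER + IPV4_HEADER + TCP_HEADER)


one_mbyte = 1024 * 1024  # assumption: 1 MByte = 1,048,576 bytes
estimate = on_wire_bytes(one_mbyte)
print(f"{estimate} bytes, {estimate / one_mbyte - 1:.1%} header overhead")
```

For 1,048,576 bytes this estimates 1,087,402 bytes, i.e., about 3.7% header overhead; the ACKs and connection setup of every small file come on top of that.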

Finally, the Time Sequence (tcptrace) graph for the 100 M file from the first test shows an almost perfectly linear rise (with one exception; first three images), while three sample graphs of individual small files show the variance of those transfers.
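In a tcptrace-style time-sequence graph, the slope of the sequence numbers over time is the throughput: a straight line means a constant rate, while a jagged line indicates stalls. As an illustrative sketch, assuming hypothetical (time, relative sequence number) samples without wrap-around, a least-squares fit recovers that slope.

```python
def sequence_slope(samples: list[tuple[float, int]]) -> float:
    """Least-squares slope (bytes per second) of sequence number over time."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_s = sum(s for _, s in samples) / n
    cov = sum((t - mean_t) * (s - mean_s) for t, s in samples)
    var = sum((t - mean_t) ** 2 for t, _ in samples)
    if var == 0:
        raise ValueError("samples need distinct timestamps")
    return cov / var


# Hypothetical samples: 6,575,000 bytes/s, roughly the rate of the single-file test.
samples = [(t / 10, 657_500 * t) for t in range(11)]
rate = sequence_slope(samples)
print(f"{rate * 8 / 1e6:.1f} MBit/s")
```

A perfectly linear rise gives a slope equal to the average rate; for the small files, the same fit would hide the stalls that make their graphs look irregular, which is why the graphs themselves are more telling than a single number.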

Conclusion

It depends! Whether a file transfer over the “fast layer 2 / layer 3 infrastructure” is actually fast depends on many factors. In this lab alone, splitting the same 100 MBytes into 100 files cut the throughput from 52.6 to 21.2 MBit/s. If FTP is used for many backup jobs, several firewalls sit in between, and the parallel streams consist of many small files, the network throughput might decrease dramatically.

Featured image “Mini Smart” by Günter Hentschel is licensed under CC BY-ND 2.0.

Great work. I appreciate your work on FortiGate. I have two 500Ds, one for testing and one for production. One is running 5.4. I’m switching to a new 1 Gbps internet connection and I’m seeing a massive difference in SSL VPN vs. IPsec throughput. The reasons for this are fairly obvious (TCP overhead with SSL), but I’m wondering if you see the same. I tend to see SSL VPN perform ~75% slower than IPsec connections. I’m using FortiClient 5.4.0.493 to make the connections.

Thanks

Hi Dave.

I have not yet tested the throughput difference between SSL VPN and IPsec. However, I fully believe that there are differences. I am not sure whether SSL VPN en/decryption is offloaded to the NP processors, while IPsec en/decryption might be. Maybe this is the reason.