This is a step-by-step tutorial for configuring a high availability cluster (active-standby) with two FortiGate firewalls. Since almost all firewall vendors have different principles for their HA cluster, I am also showing a common network scenario for Fortinet.

I am using two FortiWiFi 90D firewalls with software version v5.2.5,build701.

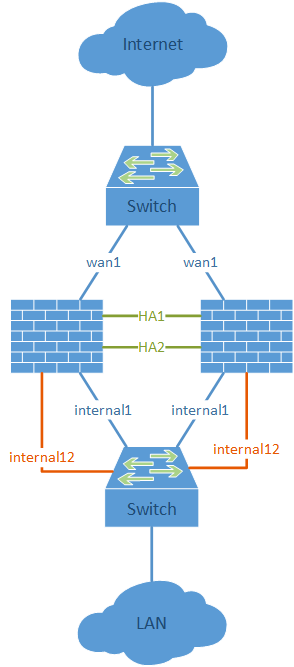

Network Layout

Basically, each data interface must be connected to the same layer-2 switch (or layer-2 segment) on both firewalls. (In my lab, these are the wan1 and internal1 ports.) Furthermore, two directly connected interfaces should be used for the HA heartbeats. If the firewall has no dedicated HA interfaces, any unused interfaces can be used instead. (In my lab, I am using ports internal13 and internal14 for the heartbeats on my FortiWiFi-90D firewalls.)

The crucial point is the out-of-band management for accessing both firewalls independently of their HA state. Fortinet has a feature called “Reserve Management Port for Cluster Member”, which must be set during the initial HA configuration. This interface must be unused up to that point and can be configured later with an IP address within the same IP subnet as an already used interface. (In my lab, I am using the internal12 ports for the management ports.)
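For reference, the settings described above could be expressed on the CLI roughly as follows. This is a minimal sketch, assuming FortiOS 5.2 syntax (later releases may configure the reserved management interface differently). The interface names, the priority of 128, and session pickup match the lab described here; the group name, the password, and the management IP address are placeholders, not values from the lab.

```
# HA settings (same on both units)
config system ha
    # group name and password are placeholders
    set group-name "lab-cluster"
    set mode a-p
    set password "ChangeMe"
    # two heartbeat interfaces, each followed by its heartbeat priority
    set hbdev "internal13" 50 "internal14" 50
    set session-pickup enable
    # reserved management port for each cluster member
    set ha-mgmt-status enable
    set ha-mgmt-interface "internal12"
    set override disable
    set priority 128
    # data interfaces whose link state is monitored
    set monitor "wan1" "internal1"
end

# management address, configured individually on each unit
config system interface
    edit "internal12"
        # placeholder address; use a different one on the second unit
        set ip 192.168.1.11 255.255.255.0
        set allowaccess ping https ssh
    next
end
```

The reserved management interface is the only one that gets its own IP address on each unit, so the second unit would get a different address from the same subnet (for example 192.168.1.12 in this sketch).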

Screenshot Guide

Note: Before cabling the HA cluster, you should configure both units and then power off (!) the secondary one. Then connect the HA heartbeat interfaces and power on the secondary unit again. This ensures that the primary unit will stay the primary (since it has the longer uptime) and syncs its configuration to the secondary one.

Following are the screenshots for this HA cluster guide. Note the descriptions under each screenshot:

The following two pictures show the physical units after the HA configuration. In the first picture, the HA cluster was not yet cabled, while in the second, it was. Note the green HA LED:

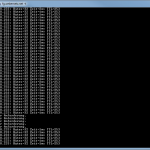

Via the CLI, the `diagnose sys ha status` or the `get system ha status` commands can be used to investigate the cluster:

```
fd-wv-fw04b $ diagnose sys ha status
HA information
Statistics
        traffic.local = s:0 p:18860 b:1708434
        traffic.total = s:0 p:19031 b:1726842
        activity.fdb = c:0 q:0

Model=90, Mode=2 Group=0 Debug=0
nvcluster=1, ses_pickup=1, delay=0

HA group member information: is_manage_master=0.
FWF90D3Z13005629, 1. Slave:128 fd-wv-fw04b
FWF90D3Z13006159, 0. Master:128 fd-wv-fw04

vcluster 1, state=standby, master_ip=169.254.0.1, master_id=0:
FWF90D3Z13005629, 1. Slave:128 fd-wv-fw04b(prio=1, rev=0)
FWF90D3Z13006159, 0. Master:128 fd-wv-fw04(prio=0, rev=255)
```

A quite cool feature is that you can manage any other firewall within the cluster from whichever unit you are logged in to. (You must first find out the device-index via “?”.)

```
execute ha manage ?
execute ha manage <device-index>
```

If you want to test a failover, you must manually decrease the priority of the current master (remember: higher priority wins):

```
execute ha set-priority <serial-number> <new-priority>
```
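As a worked example, a failover test in this lab could look like the sketch below. The serial number is that of the current master from the status output above; the new priority of 100 is only an assumed value, chosen to be lower than the 128 of the other unit. As far as I know, FGCP compares uptime before priority when override is disabled, so a priority change alone may not move the master role if the uptimes differ a lot. Treat this as a sketch and verify it on your own FortiOS version.

```
# note the current roles before the test
get system ha status

# lower the priority of the current master (fd-wv-fw04) below the other unit's 128
# (100 is an assumed example value)
execute ha set-priority FWF90D3Z13006159 100

# check which unit holds the master role now
get system ha status
```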

Featured image <Untitled> by daspunkt is licensed under CC BY 2.0.

Hi,

What IP address do you use on the slave unit for the wan & internal interfaces? Do you leave them blank?

Hi Ed,

I don’t use any further IP addresses on the other links. Only the ones explained for the management.

The data interfaces still have only their single IP address, which floats between the devices, depending on which of them is currently active.

It uses the same IP address as the master. Just leave the slave as it is; it will sync automatically.

Hi,

Your firewalls are in HA mode, but the single switch is still a single point of failure. My question is: how do I connect/configure two firewalls (HA) to two switches/core switches? I can do it with stacking/chassis technology to make two physical switches look like one switch, but stacking has a single control plane, which means that if I upgrade the switch, I must reboot both switches. There is another technology, M-LAG/MC-LAG. It provides active-active links and survives upgrades because the control planes are separate, but the switches are not a ‘single logical switch’. What is your advice about connecting a firewall HA cluster to two switches?

Thx

Hi Ibrahim,

yes, you are correct that the switch (e.g., the one facing the Internet) is a single point of failure. You should use two switches, both connected to each other and both (!) connected to the ISP router, or the like.

Then you connect the “left” firewall to the “left” switch, and the “right” firewall to the “right” switch. Now, if a switch is dead, the firewall cluster will change its active/passive state and will work with the other switch.

Hi Johannes,

nice information for HA cluster with Fortigate.

The recommendation from Fortinet is to give the different units different values of priority. You use the default value of 128 in your example.

From my experience (four HA clusters at several locations), I agree with the recommendation, and more: I change the default of 128 on both units.

With this we never had a problem when we had to replace a unit because of hardware problems.

If you run several VDOMs, you can configure which VDOM runs on which unit. That’s very nice for using the power of all units in an active-passive cluster…

Hi, can I connect directly from the FortiGate to the ISP on a different interface and with a different ISP?

??? Please explain your question in more detail. I don’t understand it.

No, you can’t.

I am in the process of setting up 2 FortiGate 500D units in HA active-passive. Can the passive unit host other tunnels while it’s waiting for the primary unit to develop problems? The OS is 5.4.0 Build 1011

Hi Paul,

no, the passive unit is really “passive”, that is, it does nothing until it becomes active. You can only configure/troubleshoot on the current active unit.

Hi

Do both FortiGates use the same IP address for WAN?

And for LAN (internal), the same IP address too?

Hi Erlianto,

the IP addresses used on all data interfaces are exactly the same. But of course only active on the active unit. If a failover occurs, the IP addresses will be active on the other (formerly passive) unit.

The only exception are the IP addresses on the port configured as “Reserve Management Port for Cluster Member” as shown in my screenshots above.

Hi Johannes,

Great post,

Why do you check only one of the WAN ports, and not both, in the monitor ports section of the HA configuration on the passive unit?

Uh, good question. This was ONLY related to my lab since I had no wan2 cable connected on the passive unit. ;) If you have all your ports connected on both units, then you should monitor all of them as well. And of course on both units.

Sorry for that.

Johannes,

Great write up, thank you for that.

I’m new to the FortiGate world & have taken over administering two 200D firewalls configured with HA (but they are not at all correct).

I have a couple questions.

What I really want to do is move the firewalls into separate buildings.

If I create a VLAN specific for the heartbeat interfaces and make sure it’s in each building, will that work?

I will also have an “Internet” VLAN that each firewall will use to connect to the internet.

The same will be true with the internal LAN VLAN(s).

Any reason why this config won’t work?

Next wrinkle: Adding SD-WAN to both of these with multiple Internet connections.

Any problems here?

Thanks Bro.

Hey Jeff.

1) HA via VLANs in different buildings? -> Will work, since the FortiGate Clustering Protocol (FGCP) runs over TCP/IP. No problem with VLANs here. No “direct cables” needed between two FortiGates.

2) Multiple VLANs/whatever-interfaces? -> I don’t see any problems here. That’s a quite common scenario.

3) Uh, I am not quite sure since I personally have not yet worked with the SD-WAN options on a FortiGate. Sorry. However, I would expect it to work.

Cheers,

Johannes

TYVM!

Hi Johannes,

thanks for the writeup, just seeking clarification again.

After configuring the backup HA unit, do we still need to add the IP addresses for the WAN and LAN on the backup?

No, they are synced between the devices.

Hi Johannes,

Just wondering, is a similar setup possible with 2 FGTs for HA and failover over 2 WAN ISP links?

Thanks

Hey GBrennan.

“ISP failover” is not like “HA setup”. You have to configure routes with failover conditions, appropriate NAT rules, etc.

Currently I don’t have such a guide on my blog. I am sorry.

Johannes

Nice blog johannes

Hi Johannes

In your scenario, what will happen if, by any chance, both HA links are down?

Will both units act as the master unit, causing an IP conflict and thus disrupting the live traffic?

Hey Deni. Yes, if both HA links are down, you’ll have a split brain situation. Ref: https://help.fortinet.com/fos50hlp/54/Content/FortiOS/fortigate-high-availability-52/HA_failoverHeartbeat.htm

Hi, thanks for the scenario. I have a question: I have two FGTs in active-passive mode and they are in sync. I have two remote routers from which two separate tunnels come to the external interface of my active unit. When I fail my active unit, the passive one takes over, but the tunnels do not come up. Everything else is in sync.

Hi Mandeep.

Uh, so everything else is working? That is: your external interface with all outgoing/incoming connections and so on? It’s only those 2 VPN tunnels that are not working after a cluster failover? Are there other working VPN tunnels after the failover? Is there an HA option that enables some kind of “sync VPN tunnels” thing? Have a look at the CLI for the HA setup. There are a lot more options in the CLI than in the GUI. If this doesn’t help, I don’t think I can help you any further. Maybe you should open a support ticket at Fortinet?

Please note that Fortinet has lots of bugs in its FortiOS version. They even differ from model to model. So it’s not unlikely that you’re running into a bug right now. ;)

Cheers

Johannes

Hi Johannes,

Nice article. I am facing an issue.

My setup is the same as yours, with small changes.

On the external (WAN) side, my setup looks the same as yours.

On the LAN (internal) side, instead of internal1 and internal2, I have only one cable connecting to the LAN switch (where one VLAN is configured for 2 ports with the same IP).

If we shut down WAN1 on the primary, HA works and all traffic flows via the secondary.

On the WAN side it is working perfectly.

But on the LAN side, if we shut down the internal port, traffic won’t flow to the secondary and everything hangs.

Questions:

Can you please share what configuration you did on the LAN-side switch?

Is there any particular parameter on the firewall that needs to be checked?